In November 2019, Arvind Narayanan, Associate Professor of Computer Science at Princeton University, gave a talk at the Massachusetts Institute of Technology (MIT) titled “How to Recognize AI Snake Oil.” In it, he argues that much of what is being sold as artificial intelligence (AI) is snake oil: it does not work and cannot work. For legal departments, this makes it difficult to tell which AI solutions are worthwhile.

He’s not alone in his assessment. Gary Marcus, a Vancouver-based AI entrepreneur and author, said that “when a team exaggerates what’s happened, the public gets the message that we’re really close to AI, and you know, sooner or later the public is going to realize that’s not true.”

It is true that much of what is being sold as AI, in products ranging from electric toothbrushes to smartphones, is technically not AI at all. True artificial intelligence remains in the realm of science fiction: some estimates place its arrival around 2030, and more conservative estimates push it out to 2060.

What is currently being marketed as AI is not, in fact, AI. Rather, it is machine learning. The MIT Technology Review defines machine learning as follows:

“Machine-learning algorithms use statistics to find patterns in massive amounts of data. And data, here, encompasses a lot of things—numbers, words, images, clicks, what have you. If it can be digitally stored, it can be fed into a machine-learning algorithm.”

Source: Karen Hao, “What Is Machine Learning?”, MIT Technology Review, November 2018

This is a far cry from the promise of artificial intelligence, which implies that a computer is actually exercising human-like judgment.

Gary Marcus has voiced similar concerns: “A lot of us are worried that we might reach another trough of disillusionment because there’s been so much hype about AI that we can’t really live up to the expectations right now.”

A practical example of AI snake oil in HR: Video assessment of job applicants

Dr. Narayanan’s objection to AI snake oil is not mainly that the wrong words are used to describe a technology, although he does point this out in his talk. The real problem is that many products sold as “AI” do not work for the purposes they claim to serve. In a corporation, for example, “AI” technology can be used to screen job applicants. In one example from his talk, a company assesses whether a candidate is suitable for a job by automatically analyzing the candidate’s application video.

Citing a 2019 study at Cornell University, Dr. Narayanan describes this product as “essentially a random number generator.” And this is not science fiction. Various Canadian companies, including Air Canada, are actively using AI-based video analysis technologies for candidate selection and recruitment. Springlaw, a Canadian employment law blog, argues that using AI in hiring can introduce inherent biases that may be legally actionable, which is reason enough not to jump on board with these technologies just yet.

Where AI solutions are working: Perception technology solutions

Dr. Narayanan acknowledges that there are some areas where “AI” technologies do work for their intended purpose. He categorizes these as “perception” solutions. Content identification, such as music or image recognition, works well, as do facial recognition, medical diagnosis from scans, and speech-to-text. Even so, facial recognition raises privacy concerns, as shown by the recent suspension of Clearview AI’s contract with the Royal Canadian Mounted Police (RCMP) and the company’s decision to stop offering its technology in Canada.

Beyond perception, however, Dr. Narayanan’s confidence in technologies being sold as AI drops off sharply.

Automating judgement: Not quite there yet

The technologies being sold to legal departments to automate basic tasks, such as content detection, fall under Dr. Narayanan’s “automating judgment” category. While he expects these technologies to keep improving, he states that “AI will never be perfect at these tasks because they involve judgment and reasonable people can disagree about the correct decision.” Arguably, this is especially true of an “AI” solution that automates searches for court decisions or other material to be used for legal purposes.

Predicting social outcomes: Not at all ready for deployment

Dr. Narayanan’s third category, solutions that aim to predict social outcomes, is not ready for deployment. He argues that this is where most of the “snake oil” resides. Predicting job performance, as in the HR video assessment example, falls into this category.

Criminal justice tools that claim to predict recidivism are also on the market, and some jurisdictions currently base bail decisions on them. Other solutions claim to accurately predict terrorism risk, and people are being turned away at borders because of them. Dr. Narayanan argues that where AI is involved, we seem to have suspended the collective common sense that would otherwise tell us this use of technology is patently wrong.

Essentially, if you wouldn’t buy snake oil for medicinal purposes, you shouldn’t buy snake oil products to solve important problems at your organization, such as deciding whom to hire or finding data to support corporate legal advice. AI tools also give malicious hackers additional areas to exploit, which can raise cybersecurity concerns.

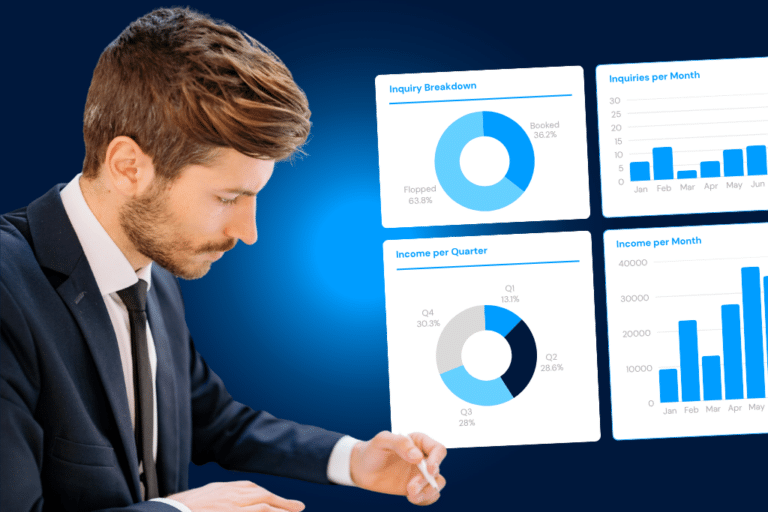

DiliTrust is a corporate legal management solution that does not use AI in any of its components, keeping your company data secure. Purpose-built by legal experts who have worked in corporate legal departments, this solution helps you intelligently manage everything in your department including contracts, mergers & acquisitions, real estate holdings, and intellectual property. Contact us today for a demonstration.