By Rupali Patel Shah, Head of Legal Solutions, DiliTrust

As we closed out the first quarter of 2026, I took a walk down memory lane and revisited some of my earlier writing on AI as a disruptor—both in the legal profession and across society more broadly.

For the most part, the predictions hold, particularly around the growing importance of information governance. That is no longer theoretical: it is showing up as a real operational need inside organizations. I am certainly more versed in AI than I was a year ago, and most people I speak with are far more conversant than they were in 2025.

But one thing hasn’t changed: I was skeptical about AI in 2025, and three months into 2026, I’m still skeptical. And I’m not alone.

After spending the last few months speaking with in-house counsel, technologists, and legal operations leaders, I have come away with a clear picture: while the excitement around AI continues at a fever pitch, so do the demands for accountability, governance, and ethical guardrails. Early adopters are no longer satisfied with experimentation; they are asking for measurable "AI dividends."

If 2025 was the year of “AI for everything, everywhere,” 2026 is shaping up to be the year of a much more important set of questions:

Why AI?

When AI?

How much of it?

For whom—and critically, by whom?

Level set 1: AI cannot be held accountable, so AI should not be making decisions

Let’s start with something that feels obvious but somehow keeps getting lost in the noise: AI cannot be held accountable. Not legally, not ethically, not operationally. It has no stake in the outcome, no judgment, and no capacity to bear consequences. That matters, because decision-making, real decision-making, requires all of those things. So, if AI cannot be accountable, it should not be making decisions. Full stop.

Level set 2: AI is a tool, not a replacement

AI is a powerful tool. But it is still just that—a tool. Not unlike a hammer or a fork, AI is useful in the right context, but its value is entirely dependent on how and why it is used.

And here’s where we need to be very clear: AI cannot replace humans. It cannot operate without human intervention. It cannot make decisions without human input. It cannot exist in any meaningful way without people and processes surrounding it.

The real risk is that organizations start behaving as if humans are no longer necessary. You see it in subtle ways at first: less investment in training, less emphasis on expertise, more reliance on tools to “figure it out.” Then you see it more explicitly: assuming the tool can carry the load and cutting headcount too quickly.

And this abdication, this overestimation of the value and utility of a tool that requires human intervention, is the number one reason technology implementations fail.

Think about it: you spend all your time training the technology and not nearly enough time training the people who have to use it. Then, when chaos ensues (because not enough people are trained on the tool, the ones who are trained can’t drive adoption on their own, and your head explodes), the organization calls it a technology failure and someone loses their job.

This isn’t new. We’ve seen this pattern play out with every major wave of technology:

- Overinvest in the tool

- Underinvest in people and process

- Expect transformation

- Get disappointment

And now we’re doing it again—with AI.

In legal, in particular, the pressure to drive efficiency is real. AI absolutely has a role to play in that. But the way we’re currently using it is adding to the problems instead of solving them. We’re deploying AI in ungoverned, scattered ways, adopting multiple tools across teams with little coordination and making decisions based on individual preferences—what works for one person, one workflow, one moment.

Call it experimentation if you want, but a lot of it looks more like “vibe coding” than enterprise strategy. That’s not transformation; that’s chaos with a user interface.

The real AI dividend

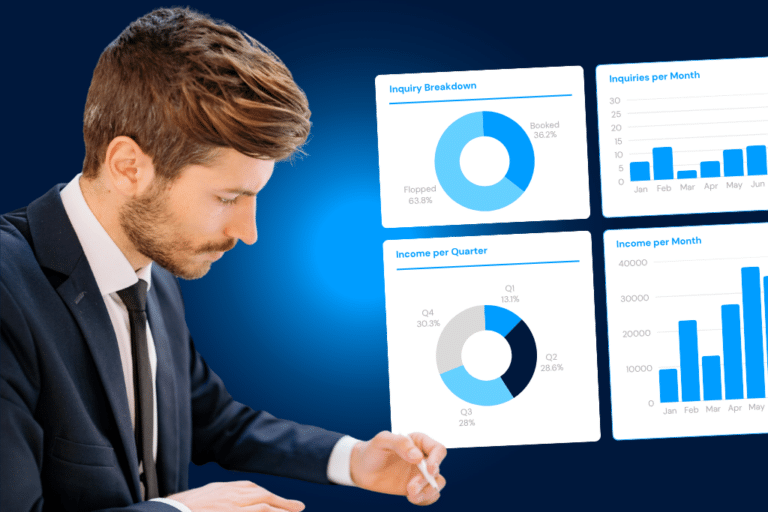

Now, none of this is to say AI isn’t valuable. When used appropriately, it can be incredibly powerful. In fact, AI is exceptional at recognizing patterns and surfacing insights quickly from large volumes of data. It can take in more information than any individual human could process and make that information usable. It is a force multiplier.

And that’s where we’re seeing real AI dividends (the actual ROI on AI investment, both tangible and intangible), particularly in legal, through rapid, bespoke analytics and improved access to information.

But notice what all of that leads to: better-informed humans, not autonomous systems making decisions. So how do you get better-informed humans? Governance, of course.

AI without governance doesn’t deliver dividends. In fact, it can make things worse. Good outcomes don’t come from tools; they come from defined processes, high-quality governed data, and people trained to use both. That’s the foundation, and it’s also where legal departments should be leading, because at its core, legal is about consistency, accountability, and defensibility.

Technology is a tool. With the right user and for the right purpose, it can be incredibly powerful. But overestimating what a tool can do is dangerous, because when we do that, we start to ignore the things that actually make technology work:

People.

Process.

Governance.

You need all three. You cannot shortcut them. And AI is no different.

Better decisions, not fewer humans

If we get this right, AI can absolutely help us make better decisions: faster, with more confidence, and with better outcomes. If we get it wrong, we won’t just miss the upside; we’ll create new risks that are harder to detect, harder to explain, and harder to defend.

So the question isn’t whether AI will be part of the future of legal and business. It will be. The real question is whether we use it to augment human judgment—or try to replace it.

Because one of those paths leads to better decisions and the other leads to failure we could have seen coming.